It shouldn't be called optimizing

Optimizing means that you make something optimal, so why is it called that when a programmer makes their program run faster or use less memory? It can almost always run even faster or use even less memory, so this process is many good things but not really “optimization”.

I don’t know where it ultimately started, but it’s been called that since at least the 1950s. For example, check out this page from a 1956 Fortran manual:

The FREQUENCY statement permits the programmer to give his estimate, for each branch-point of control, of the frequencies with which the several branches will actually be executed in the object program. This information is used to optimise the use of index registers in the object program.

The authors felt no dissonance in saying that the compiler optimises with default settings but will optimise even more if you give it frequency information. If it was already optimal, how could it be optimised further?

They are using this word a lot.

The usage may have come from the field of linear programming, where true optimization is possible. Linear programming received a lot of effort during and around World War II as a way to allocate industrial capacity. A country that was better at linear programming could literally have more bullets to fling around. There was a breakthrough for linear programming in 1947—the Simplex Method—so the timing lines up.

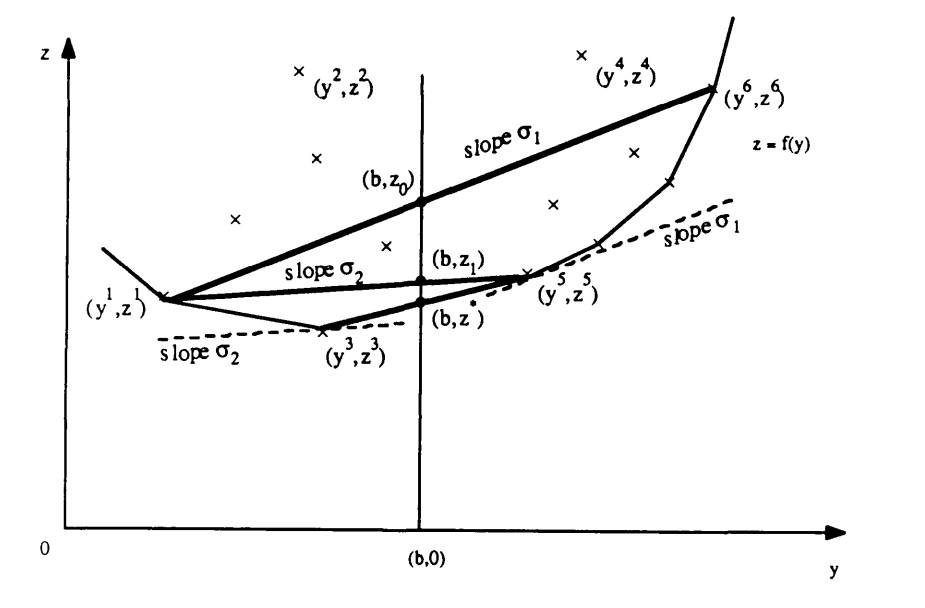

It’s interesting to see why linear programs can be optimized but not computer programs. With linear programs, the solution space for a problem ends up having a very convenient shape called a convex hull. Here is how George B. Dantzig visualized the idea for his readers.

This is a lot, but start by looking at the overall shape of the thing. There is a curved shape around the bottom—that’s part of the convex hull. The acceptible solutions are the ones that are on the vertical line in the middle—the one above the (b, 0) in the graph—but that are as low as possible while still be above the curved underbelly part.

The alorithm starts by choosing any two points on the underbelly part, and in this example, the chosen points are at (y1, z1) and (y6, z6). It then feels around the graph with a sequence of mathematical steps. A line is drawn between those two points, and the place it intersects the b line is found. That’s already a solution but not the best one. To find a better one, the point on the right is pulled down to as far as possible while staying on the right side of the b line, giving the point (y5, z5). Then the left point is pulled down in the same way, to (y3, z3). No more progress is possible, so to find the final answer, draw a line between these last to points and see where it crosses the b line.

Computer programs do not have this convenient outer shape to their behaviors, so there is no good way to feel around their outer shape, like this. Computer programs have loops, branches, and subroutines, and you cannot even know if a program will run forever or not, much less anything trickier like “can it run any faster?” or “can it use less memory?”.

The exact proof is too much to get into for this post, but it was one of a number of major impossibility results that happened around the same time as the rest of this story:

1874. Cantor proved that the real numbers cannot be counted, something I find a little weird.

1931. Gödel proved that not all math statements can be proven or disproven—his incompleteness theorems.

1936. Alan Turing proved that there is no computer program which can reliably decide whether another program will run forever or not. This is called the Halting Problem nowadays, but Turing called it the “circle-free” property, and it was his contribution to the awesomely named “Entscheidungsproblem” (decision problem).

Why is Turing naming things with German words? That is a rabbit hole of its own, but this was around the time that Albert Einstein was sharing his Gedankenexperiments (thought experiments), so maybe it felt right to name these other fundamental problems in German as well. I have no real idea but get tickled at the mental image. These folks were working on very fundamental ideas and were later swept into the war effort, and here they are babbling long German gedorkenwords to each other.

At any rate, Turing showed that you cannot even tell if a computer program will stop or not, so almost anything you would like to “optimize” about a program cannot be.

As soon as the program has a part that goes, “do this thing, then do the other thing”, there is no way to know if “do this thing” will finish, so “do the other thing” may or may not happen. Most of the ways to improve a program would need to know this information, so most of the time, not only is a program not optimized, it often simply can’t be.

We should probably call it “improving” a program rather than optimizing, but I guess that doesn’t have the same ring to it.